The sun produces more than 100 trillion times as much power as all of humanity’s electricity generation. In orbit, solar panels can be up to eight times more productive than their counterparts on Earth, producing energy almost continuously without the need for heavy battery storage. These facts led a team of Google researchers to ask: what if the best place to scale artificial intelligence wasn’t on Earth at all, but in space?

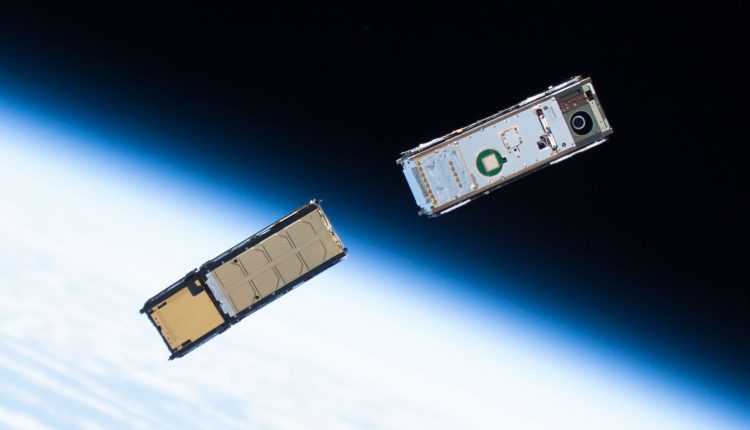

Project Suncatcher, Google’s latest research moonshot, envisions constellations of solar-powered satellites equipped with processors and connected via laser-based optical links. The concept addresses one of AI’s most pressing challenges, the enormous energy demands of large machine learning systems, by tapping directly into the solar system’s ultimate energy source. A new research paper from Google details the team’s progress in overcoming the technical challenges.

This artist’s concept shows the Optical Payload for Lasercomm Science (OPALS) and its laser that will beam data from the International Space Station to Earth. The same concept will be used to connect satellites in the new space-based data centers (Source: NASA/JPL)

The proposed system would operate in a sun-synchronous low-Earth orbit, in which the satellites are exposed to near-constant sunlight. This orbital choice maximizes solar energy collection while minimizing battery requirements. However, to make a space-based AI infrastructure viable, several daunting technical challenges must be solved.
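A sun-synchronous orbit works because Earth’s equatorial bulge (the J2 term of its gravity field) makes an orbit’s plane slowly rotate. If the inclination is chosen so that the plane turns exactly once per year, it keeps a fixed orientation relative to the Sun. Near-constant sunlight additionally requires placing that plane along the day-night terminator (a so-called dawn-dusk orbit). The following is a minimal sketch, assuming a circular orbit and standard rounded Earth constants, of roughly what inclination a 650 km orbit would need. The function name is hypothetical; this is textbook orbital mechanics, not code from Google’s paper.

```python
import math

# Standard Earth constants (rounded)
MU_EARTH = 398600.4418      # km^3/s^2, gravitational parameter
R_EARTH = 6378.137          # km, equatorial radius
J2 = 1.08263e-3             # dimensionless oblateness coefficient

# Required nodal precession: one full turn (2*pi rad) per tropical year
TROPICAL_YEAR_S = 365.2422 * 86400.0
OMEGA_DOT_SSO = 2.0 * math.pi / TROPICAL_YEAR_S  # rad/s


def sso_inclination_deg(altitude_km: float, ecc: float = 0.0) -> float:
    """Inclination (degrees) at which J2 nodal precession matches the Sun's apparent motion.

    Hypothetical helper for illustration; assumes only the secular J2 effect.
    """
    a = R_EARTH + altitude_km            # semi-major axis, km
    p = a * (1.0 - ecc ** 2)             # semi-latus rectum, km
    n = math.sqrt(MU_EARTH / a ** 3)     # mean motion, rad/s
    # Secular J2 drift of the ascending node:
    #   dOmega/dt = -1.5 * n * J2 * (R_E / p)^2 * cos(i)
    # Solve dOmega/dt = OMEGA_DOT_SSO for cos(i).
    cos_i = -OMEGA_DOT_SSO / (1.5 * n * J2 * (R_EARTH / p) ** 2)
    return math.degrees(math.acos(cos_i))


print(f"{sso_inclination_deg(650.0):.2f} deg")
```

At 650 km the result is about 98 degrees: a slightly retrograde, near-polar orbit, which is typical of sun-synchronous orbits.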

The first is to achieve data center-scale communication speeds between satellites. Large machine learning workloads must be distributed across many processors linked by high-bandwidth, low-latency connections. Matching the performance of Earth-based data centers requires inter-satellite links that carry tens of terabits per second. Google’s analysis suggests that this should be achievable with dense wavelength division multiplexing and spatial multiplexing, but only if the satellites fly in very close formation, a few kilometers apart or less. The research team has already validated this approach in a laboratory-scale demonstration that reached a total throughput of 1.6 terabits per second.
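The need for tight formations follows from two simple relationships. First, multiplexing multiplies capacity: every wavelength (DWDM) on every parallel spatial path carries its own data stream, so total capacity scales with their product. Second, in the far field a laser beam keeps spreading, so the power a receiver of fixed aperture collects falls off roughly with the square of the distance. Shorter links leave more power per channel, and that is what allows many channels to run at high rates at once. The channel counts and per-channel rate below are hypothetical round numbers chosen for illustration, not figures from Google’s paper, and the function names are likewise hypothetical.

```python
def aggregate_bandwidth_tbps(wavelengths: int, spatial_channels: int,
                             gbps_per_channel: float) -> float:
    """Total link capacity in Tb/s when every wavelength on every spatial path
    carries an independent stream (hypothetical helper)."""
    return wavelengths * spatial_channels * gbps_per_channel / 1000.0


def relative_received_power(distance_km: float, reference_km: float) -> float:
    """Received power relative to a reference distance for a far-field beam,
    whose spot area grows with distance squared (hypothetical helper)."""
    return (reference_km / distance_km) ** 2


# Hypothetical configuration: 40 DWDM wavelengths x 4 spatial paths x 100 Gb/s each
print(aggregate_bandwidth_tbps(40, 4, 100.0))  # 16.0 Tb/s

# Stretching a link from 1 km to 10 km leaves about 1% of the received power
print(relative_received_power(10.0, 1.0))
```

The quadratic loss explains why Google’s analysis ties tens of terabits per second to separations of kilometers or less.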

Flying satellites in such a tight formation presents its own challenges. At the planned altitude of around 650 kilometers, satellites less than a kilometer apart would need careful orbital control. Google developed detailed physics simulations to analyze how the non-spherical shape of Earth’s gravitational field and atmospheric drag would affect these densely packed constellations. Their models suggest that only modest station-keeping maneuvers should be required to maintain stable formations.
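The article does not say which models Google used. A standard starting point for formation flying is the Hill-Clohessy-Wiltshire (HCW) equations, which describe a satellite’s motion relative to a reference satellite on a circular orbit. They ignore Earth’s non-spherical gravity and atmospheric drag, so they capture only the baseline dynamics. The sketch below evaluates their closed-form solution, with x radial, y along-track and z cross-track, in kilometers and km/s. The function names are hypothetical. It shows why formations need maintenance: unless the initial along-track velocity satisfies vy0 = -2·n·x0, the relative position drifts steadily along the track.

```python
import math

MU_EARTH = 398600.4418   # km^3/s^2
R_EARTH = 6378.137       # km


def mean_motion(altitude_km: float) -> float:
    """Mean motion n (rad/s) of a circular orbit at the given altitude."""
    a = R_EARTH + altitude_km
    return math.sqrt(MU_EARTH / a ** 3)


def hcw_position(t, n, x0, y0, z0, vx0, vy0, vz0):
    """Closed-form HCW solution: relative position (x radial, y along-track,
    z cross-track) at time t, given initial relative position and velocity."""
    s, c = math.sin(n * t), math.cos(n * t)
    x = (4 - 3 * c) * x0 + (s / n) * vx0 + (2 / n) * (1 - c) * vy0
    y = (6 * (s - n * t) * x0 + y0
         - (2 / n) * (1 - c) * vx0
         + ((4 * s - 3 * n * t) / n) * vy0)
    z = c * z0 + (s / n) * vz0   # cross-track motion is a simple oscillation
    return x, y, z


n = mean_motion(650.0)
period = 2 * math.pi / n
x0 = 0.1  # 100 m radial offset from the reference satellite

# Drift-free initial condition: vy0 = -2 n x0 gives a closed relative orbit
bounded = hcw_position(period, n, x0, 0.0, 0.0, 0.0, -2 * n * x0, 0.0)

# The same orbit with a 1 mm/s along-track velocity error
drifting = hcw_position(period, n, x0, 0.0, 0.0, 0.0, -2 * n * x0 + 1e-6, 0.0)

print(bounded)
print(drifting)
```

In this idealized model, a 1 mm/s velocity error already produces roughly 18 m of along-track drift per orbit. Small, periodic corrections are therefore needed even before drag and gravity-field perturbations are considered.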

Artist’s impression of the STEREO Observatory spacecraft during solar panel deployment. Satellites serving as orbital data centers would fly at low altitudes in close formation and would therefore require careful orbital control (Source: NASA/Johns Hopkins University Applied Physics Laboratory)

Perhaps surprisingly, Google’s TPU processors appear to be remarkably resilient to space conditions. In radiation tests of the Trillium (v6e) Cloud TPU, the high-bandwidth memory (HBM) subsystems proved to be the most sensitive component. Even so, irregularities appeared only after a cumulative dose of about 2 kilorads, nearly three times the roughly 750 rads expected over a shielded five-year mission.

Whether all this makes financial sense depends heavily on whether launch costs continue to fall. Google’s analysis suggests that further improvements in launch technology could bring prices below $200 per kilogram by the mid-2030s. At that price, the cost of launching and operating a space-based data center could be roughly comparable to the energy costs of an equivalent Earth-based facility.
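The two quantitative claims here come down to simple arithmetic. The radiation margin is the ratio of the dose at which HBM irregularities began to the expected mission dose. A launch-cost comparison can be made per kilowatt per year by amortizing the launch price over the hardware’s lifetime. The sketch below assumes that framing. The mass per kilowatt and the lifetime are hypothetical placeholders, not values from Google’s paper, and the calculation covers launch only, not hardware or operations. The function names are hypothetical.

```python
def radiation_margin(observed_onset_rad: float, expected_mission_dose_rad: float) -> float:
    """Ratio of the dose at which irregularities began to the expected mission dose."""
    return observed_onset_rad / expected_mission_dose_rad


def launch_cost_per_kw_year(usd_per_kg: float, kg_per_kw: float, lifetime_years: float) -> float:
    """Launch cost amortized over the hardware lifetime, in USD per kW per year.
    Ignores hardware, operations and financing costs."""
    return usd_per_kg * kg_per_kw / lifetime_years


# Figures from the article: irregularities at ~2 krad vs. ~750 rad expected (shielded, 5 years)
margin = radiation_margin(2000.0, 750.0)
print(f"radiation margin: {margin:.2f}x")  # about 2.67x, i.e. "nearly three times"

# Hypothetical inputs for illustration only: 10 kg of satellite per kW, 5-year life,
# at the projected launch price of $200/kg
cost = launch_cost_per_kw_year(200.0, 10.0, 5.0)
print(f"launch cost: ${cost:.0f} per kW-year")  # $400 per kW-year
```

The resulting figure can then be set against the energy cost per kilowatt-year of a terrestrial data center. The comparison is very sensitive to the assumed mass per kilowatt, which is why falling launch prices matter so much.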

Source: Exploring a space-based, scalable AI infrastructure system design
